AI Video Weekly Roundup — April 17, 2026

Alibaba dominated the week, rounding out an AI video and world-modeling lineup that now spans three distinct products from two business units. Meanwhile, the open-source tier gained a serious new contender in SkyReels V4, and xAI signaled that Grok Imagine’s 720p ceiling is about to go away.

Models covered: Happy Oyster · HappyHorse · Wan · SkyReels · Grok Imagine

🦪 Alibaba Launches Happy Oyster — A World Model That Builds Playable 3D Environments

On April 16, Alibaba released Happy Oyster, a new “world model” capable of generating interactive 3D environments from text prompts. Unlike the company’s video generation models, Happy Oyster doesn’t produce flat clips — it creates navigable, physics-aware 3D worlds that users can explore in real time.

The model was developed by Alibaba Token Hub (ATH), the same business unit behind HappyHorse-1.0. Happy Oyster is built on a native multi-modal architecture supporting two core interaction modes: Directing, which lets users build and modify a physical world on the fly, and Wandering, which places the user inside an endlessly expanding first-person environment generated from a single prompt. Both modes run in real time and simulate real-world physics.

The timing is hard to miss. OpenAI cited “world simulation for robotics” as the reason for shutting down Sora, and weeks later Alibaba is shipping a world simulation product. Tencent’s Hunyuan HY-World 2.0 also plays in this space, but it reconstructs 3D worlds from video clips; Happy Oyster generates them from scratch.

Happy Oyster is currently available only to a limited number of early-access users. No pricing or general availability timeline has been announced. The target applications are gaming, film production, and VR experiences.

Why it matters: This is the clearest signal yet that the next frontier in AI video is not longer or higher-resolution clips — it’s interactive 3D worlds. Alibaba now has three distinct AI video/media products in the field: Wan (open-source video generation), HappyHorse (benchmark-leading quality), and Happy Oyster (world modeling). That’s a broader portfolio than any other company in the space.

🧠 Wan 2.7 Brings “Thinking Mode” to Open-Source Video Generation

Alibaba’s Tongyi Lab released Wan 2.7 Video on April 3, a major upgrade to its open-source video model line that brings chain-of-thought reasoning to video generation. In “Thinking Mode,” the model first analyzes the prompt and plans composition and scene logic, then generates, with the aim of producing more coherent output with fewer artifacts than generating directly from the prompt.

Wan 2.7 Video ships with five distinct task types: text-to-video, image-to-video (supporting first-frame, first-and-last-frame, and audio-driven inputs), video continuation with text guidance, reference-to-video with up to five real-person inputs, and video editing via text, reference images, or style transfer. The model generates at up to 1080p resolution in 2–15 second clips.

ComfyUI added Wan 2.7 support the same day in version 0.18.5, with workflow templates for all five task types. Desktop and Comfy Cloud support are listed as coming soon. The model is also available through Alibaba Cloud’s Model Studio and the official Wan website.

Wan 2.7 Image-Pro, released alongside the video model, outputs at 4K and has its own built-in chain-of-thought reasoning mode, a capability few image models ship with.

Why it matters: Thinking Mode is a genuinely new capability in video generation. The idea of a model planning before it renders, analogous to how reasoning models like o1 improved text generation by “thinking first,” could become standard across the field if the quality gains hold up in production use. For the open-source community, unifying five task types in a single model makes Wan 2.7 a significant leap in flexibility over the Wan 2.2 line.

🎬 SkyReels V4: Open-Source Audio-Video Generation Arrives

SkyReels V4, announced April 3, is an open-source model that generates video and synchronized audio jointly, rather than adding sound in a separate step. It uses a dual-stream Multimodal Diffusion Transformer (MMDiT) architecture, with one branch synthesizing video and the other generating temporally aligned audio, and produces 1080p video at 32 FPS in clips up to 15 seconds.
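
For readers curious how two branches can stay in sync, here is a minimal pure-Python sketch of the joint-attention step used in MMDiT-style designs. It assumes, as in common MMDiT models, that each modality keeps its own projection weights while attention runs over the combined video and audio token sequence; SkyReels V4's actual coupling mechanism may differ. Token counts, dimensions, and weights are arbitrary, and `Stream` and `joint_attention` are hypothetical names.

```python
import math
import random

# Toy MMDiT-style joint attention over two streams. Each modality keeps its
# own Q/K/V projection weights, but attention runs over the concatenated
# video+audio sequence, so every token can attend across modalities.
# Sizes and weights are arbitrary; timestep conditioning, MLPs, and the
# diffusion sampling loop are all omitted.

Matrix = list[list[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def softmax(row: list[float]) -> list[float]:
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.gauss(0.0, 0.5) for _ in range(cols)] for _ in range(rows)]


class Stream:
    """One modality's branch: its own Q/K/V projections (random stand-ins)."""

    def __init__(self, dim: int, rng: random.Random) -> None:
        self.wq = random_matrix(dim, dim, rng)
        self.wk = random_matrix(dim, dim, rng)
        self.wv = random_matrix(dim, dim, rng)

    def project(self, x: Matrix) -> tuple[Matrix, Matrix, Matrix]:
        return matmul(x, self.wq), matmul(x, self.wk), matmul(x, self.wv)


def joint_attention(video: Matrix, audio: Matrix,
                    video_stream: Stream, audio_stream: Stream) -> tuple[Matrix, Matrix]:
    qv, kv, vv = video_stream.project(video)
    qa, ka, va = audio_stream.project(audio)
    # Concatenate along the sequence axis: one attention over both modalities.
    q, k, v = qv + qa, kv + ka, vv + va
    scale = 1.0 / math.sqrt(len(q[0]))
    scores = matmul(q, transpose(k))
    weights = [softmax([s * scale for s in row]) for row in scores]
    out = matmul(weights, v)
    # Split the result back into per-modality token sequences.
    return out[:len(video)], out[len(video):]


rng = random.Random(0)
dim = 4
video_tokens = random_matrix(6, dim, rng)  # e.g. 6 video patch tokens
audio_tokens = random_matrix(3, dim, rng)  # e.g. 3 audio frame tokens
video_stream, audio_stream = Stream(dim, rng), Stream(dim, rng)
video_out, audio_out = joint_attention(video_tokens, audio_tokens, video_stream, audio_stream)
```

Because audio tokens share the attention window with video tokens, changing the audio input shifts the video outputs too. In designs of this kind, that shared attention is one way the branches stay aligned instead of being stitched together afterward.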

The model accepts multimodal inputs including text, images, video clips, masks, and audio references, making it viable for editing and inpainting workflows alongside pure generation. SkyReels V4 already ranks among the top models in the text-to-video with audio category on the Artificial Analysis leaderboards, with an Elo score near 1,135.

A free tier is available on the SkyReels platform with 70 monthly credits. The model weights are open-source for local deployment.

Why it matters: Joint audio-video generation has been a defining feature of commercial models like Seedance 2.0, Veo 3.1, and Grok Imagine, while open-source options have been scarce. SkyReels V4 narrows that gap. For creators running local workflows or building custom pipelines, native audio sync without a separate post-processing step is a meaningful workflow improvement.

📺 Grok Imagine Adds Quality Mode, Confirms 1080p Pro Tier for Late April

xAI rolled out two new generation modes for Grok Imagine in early April: Quality mode, which produces four high-detail images or video clips per generation, and Speed mode, which preserves the platform’s signature fast iteration. The update simplifies the interface to a single toggle between the two approaches.

More significantly, Elon Musk confirmed that Grok Imagine Pro — a full 1080p upgrade for both images and video — will launch later this month. Pro mode will be available to SuperGrok subscribers. If delivered, this eliminates Grok Imagine’s most significant competitive weakness: the 720p resolution ceiling that has kept it below every other major commercial model on output quality despite having the cheapest API pricing in the field.

The Pro tier reportedly runs on an upgraded version of xAI’s Aurora autoregressive mixture-of-experts (MoE) engine, with improved temporal coherence and KV-cache optimization enabling native audio synchronization at the higher resolution.
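
xAI hasn't detailed what its KV-cache optimizations change. As background only, the sketch below shows what a KV cache does in any autoregressive transformer: keys and values for earlier tokens are computed once and reused, instead of being recomputed at every step. It is not Aurora's implementation; the projection matrices, token vectors, and function names are made up for illustration.

```python
import math

# Minimal single-head causal attention for autoregressive decoding.
# Compares recomputing every key/value at each step with reusing a cache.
# The 2x2 projection matrices and token vectors are made-up toy values, and
# the query projection is skipped (the raw token is used as the query).

Vector = list[float]
Matrix = list[list[float]]

W_K: Matrix = [[0.5, -0.2], [0.1, 0.9]]  # hypothetical key projection
W_V: Matrix = [[1.0, 0.3], [-0.4, 0.7]]  # hypothetical value projection


def project(w: Matrix, x: Vector) -> Vector:
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def attend(query: Vector, keys: list[Vector], values: list[Vector]) -> Vector:
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [sum(w * val[i] for w, val in zip(weights, values)) / total
            for i in range(len(values[0]))]


def decode_without_cache(tokens: list[Vector]) -> tuple[list[Vector], int]:
    """Re-project every visible token at every step: 1 + 2 + ... + n key/value projections."""
    outputs, projections = [], 0
    for t in range(len(tokens)):
        prefix = tokens[: t + 1]  # causal: only tokens up to step t are visible
        keys = [project(W_K, tok) for tok in prefix]
        values = [project(W_V, tok) for tok in prefix]
        projections += len(prefix)
        outputs.append(attend(tokens[t], keys, values))
    return outputs, projections


def decode_with_cache(tokens: list[Vector]) -> tuple[list[Vector], int]:
    """Project only the newest token and append it to the cache: n key/value projections."""
    key_cache: list[Vector] = []
    value_cache: list[Vector] = []
    outputs, projections = [], 0
    for tok in tokens:
        key_cache.append(project(W_K, tok))
        value_cache.append(project(W_V, tok))
        projections += 1
        outputs.append(attend(tok, key_cache, value_cache))
    return outputs, projections


tokens = [[1.0, 0.0], [0.5, 0.5], [-0.3, 0.8], [0.2, -1.0]]
slow_out, slow_count = decode_without_cache(tokens)
fast_out, fast_count = decode_with_cache(tokens)
print(slow_count, fast_count)
```

Both decoders produce the same outputs, but for four tokens the cached version does 4 key/value projections instead of 10. The uncached cost grows quadratically with sequence length, so caching tends to matter more as sequences get longer, as they do at higher resolutions.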

Why it matters: At 720p, Grok Imagine was positioned as the budget option: fast and cheap, but visibly lower quality. At 1080p with the existing $4.20/min API pricing, it becomes a legitimate mid-tier competitor. The question is whether xAI will keep that pricing at the higher resolution or introduce a premium tier. Either way, X’s distribution advantage of 500M+ users means more when the output quality matches the reach.
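
To put the per-minute rate in per-clip terms, the sketch below converts $4.20/min into clip costs. It assumes linear per-second billing and uses a made-up 1.5x multiplier to show what a 1080p premium tier might look like; neither is a published xAI pricing rule, and `clip_cost` is a hypothetical helper.

```python
from decimal import Decimal, ROUND_HALF_UP

# The $4.20/min figure is the rate quoted above. Linear per-second billing and
# the optional premium multiplier are assumptions for illustration, not
# published xAI terms.
RATE_PER_MINUTE = Decimal("4.20")


def clip_cost(seconds: int, premium: Decimal = Decimal("1")) -> Decimal:
    """Estimated cost of one clip, rounded to the cent."""
    cost = RATE_PER_MINUTE * premium * seconds / 60
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


for seconds in (6, 10, 15):
    current = clip_cost(seconds)
    hypothetical = clip_cost(seconds, premium=Decimal("1.5"))  # made-up 1.5x 1080p premium
    print(f"{seconds:>2}s clip: ${current} at today's rate, ${hypothetical} with a 1.5x premium")
```

`Decimal` keeps the cent rounding exact, which binary floats would not guarantee for values like 0.7.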

📈 By the Numbers

- 3 products — Alibaba AI video/media offerings now in the field, from two teams: Wan (Tongyi Lab), HappyHorse and Happy Oyster (both ATH)

- 5 task types — Wan 2.7 Video’s unified capabilities: T2V, I2V, video continuation, reference-to-video, and video editing

- 32 FPS — SkyReels V4’s frame rate at 1080p, with native audio, in clips up to 15 seconds

- 1080p — Grok Imagine Pro’s confirmed resolution upgrade, eliminating the 720p ceiling

- Elo 1,361 — HappyHorse-1.0’s updated T2V (no audio) score on Artificial Analysis, up from 1,333 at reveal

- 70 — Free monthly credits on SkyReels V4 platform for new users
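
Elo gaps are easier to read as win rates. Assuming Artificial Analysis's scores follow the standard Elo scale (a base-10 logistic curve with a 400-point divisor), HappyHorse-1.0's 28-point rise means it would be expected to win roughly 54% of head-to-head preference votes against a model rated at its reveal-day 1,333:

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Standard Elo expected score: chance that A is preferred over B in a head-to-head vote."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# HappyHorse-1.0 today (T2V, no audio) versus a model sitting at its reveal-day score.
p = expected_score(1361, 1333)
print(f"Expected preference: {p:.1%}")
```

The formula only makes sense within one leaderboard category: the no-audio T2V score above and SkyReels V4's audio-category score near 1,135 come from separate rankings and aren't directly comparable.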

🔮 What to Watch Next Week

- Grok Imagine Pro launch — Musk said “later this month.” If it ships next week with 1080p at the current $4.20/min API rate, every mid-tier model will need to respond

- HappyHorse weights release — Listed as “coming soon” since April 10. The open-source community is watching closely; a release would immediately reshape the local generation landscape

- TAKE IT DOWN Act platform compliance — May 19 deadline is now 32 days away. Expect platform policy announcements from major hosts as the date approaches

- Sora app shutdown — April 26 is nine days out. Users have until then to export content from the Sora web interface
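
The countdowns above can be double-checked with Python's standard `datetime` module, counting calendar days from this issue's April 17 date (`days_until` is a hypothetical helper):

```python
from datetime import date

ISSUE_DATE = date(2026, 4, 17)  # this roundup's publication date

DEADLINES = {
    "TAKE IT DOWN Act platform compliance": date(2026, 5, 19),
    "Sora app shutdown": date(2026, 4, 26),
}


def days_until(deadline: date, today: date = ISSUE_DATE) -> int:
    # Subtracting two dates yields a timedelta; .days is the whole-day difference.
    return (deadline - today).days


for label, deadline in DEADLINES.items():
    print(f"{label}: {days_until(deadline)} days out")
```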

For full specs, pricing, and access details on every model covered this week, see the AI Video Generation Tools 2026 reference page — updated every Friday.

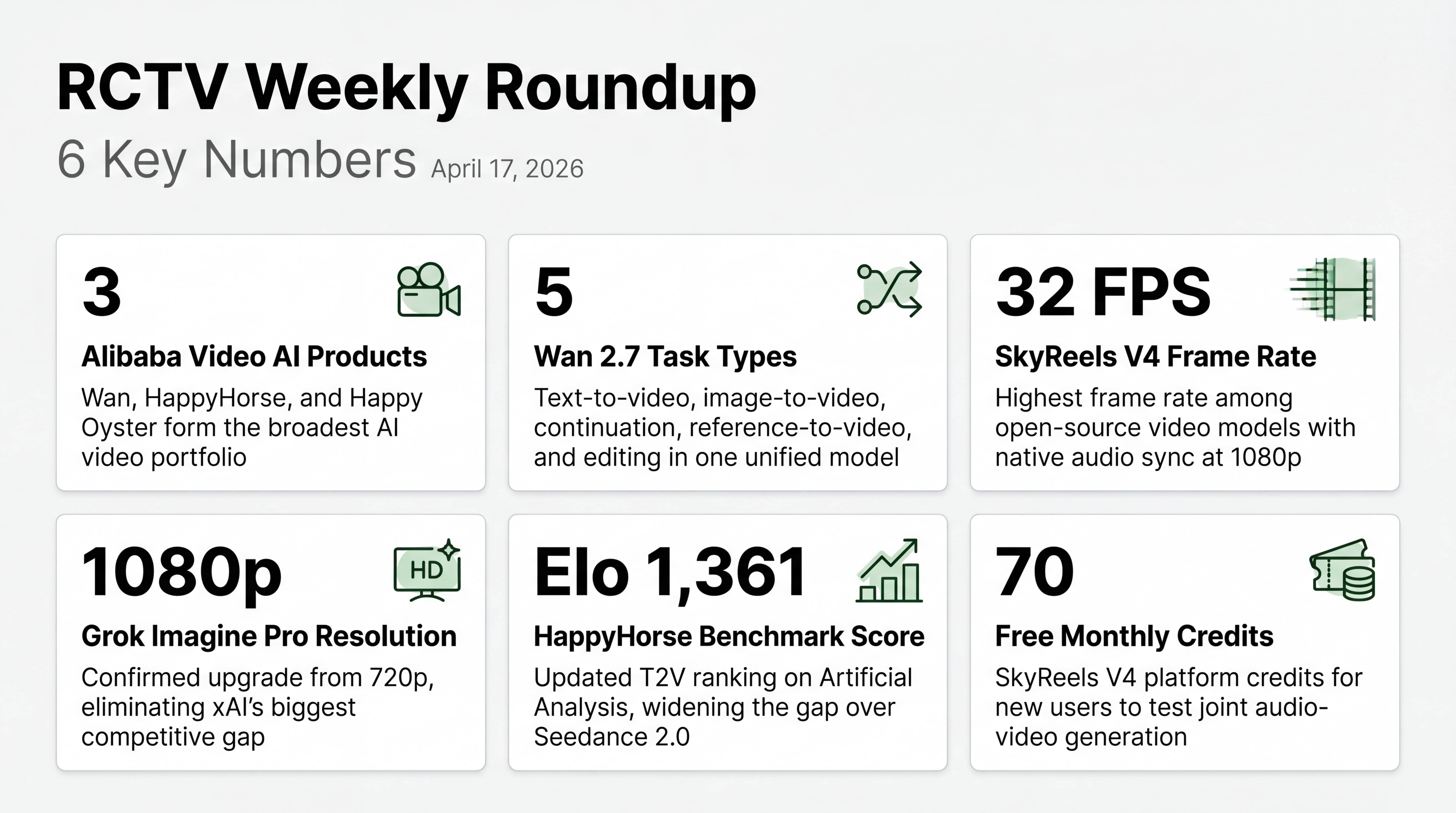

INFOGRAPHIC DATA

- 3 — Alibaba Video AI Products — Wan, HappyHorse, and Happy Oyster now form the broadest AI video portfolio from any single company

- 5 — Wan 2.7 Task Types — Text-to-video, image-to-video, continuation, reference-to-video, and editing in one unified model

- 32 FPS — SkyReels V4 Frame Rate — 1080p output with native audio sync from an open-source model

- 1080p — Grok Imagine Pro Resolution — Confirmed upgrade from 720p, eliminating xAI’s biggest competitive gap

- Elo 1,361 — HappyHorse Benchmark Score — Updated T2V ranking on Artificial Analysis, widening the gap over Seedance 2.0

- 70 — Free Monthly Credits — SkyReels V4 platform credits for new users to test joint audio-video generation